School of Data: The Pipeline

"We are missing data literacy," said Tim Berners-Lee at Open Government Data Camp 2010 in London. Here we are in 2012 in Berlin, together with OKFN, P2PU and their friends, preparing the content of the School of Data from the very beginning.

Based on our lively discussions led by Rufus Pollock, and on reviews by Peter Murray-Rust and Friedrich Lindenberg, I've created the pipeline-based skill map that I will talk about here.

The Pipeline

Data come in many forms: ore-like solid (think of the web and documents), crystallized solid (think of databases), flowing liquid (think of data being processed) and evaporating gas (think of data nobody pays attention to). The best way of looking at data is to look at them in all their stages as they go through a connected, but easily dismantled, processing pipeline.

The flow is divided into the following parts:

- discovery and acquisition – covers data source understanding, ways of getting data from the web and knowing when we have gathered enough

- extraction – when data has to be scraped from unstructured documents into structured tables, or loaded from a tabular file into a database

- cleansing, transformation and integration – the majority of the data processing skills, from understanding data formats, through knowing how to merge multiple sources, to process optimization

- analytical modeling – reshaping data so they can be viewed from an analytical point of view, and finding various patterns

- presentation, analysis and publishing – mostly non-technical or just very slightly technical skills for story tellers, investigators, publishers and decision makers
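To make the idea of a connected but dismantlable flow more concrete, here is a minimal Python sketch in which each stage is a separate function that can be run and inspected on its own. Everything in it is an assumption made for illustration: the function names, the tiny inline CSV and its toy values are hypothetical and are not part of the School of Data material.

```python
import csv
import io
import sqlite3

# Hypothetical toy source standing in for a file found on the web.
RAW_CSV = "name,amount\nAlpha, 10\nBeta,20\nalpha,10\n"


def acquire():
    """Discovery and acquisition: here we simply return the inline sample."""
    return RAW_CSV


def extract(raw_text):
    """Extraction: turn raw text into structured rows (a list of dicts)."""
    return list(csv.DictReader(io.StringIO(raw_text)))


def cleanse(rows):
    """Cleansing: trim whitespace, normalize names, drop duplicate names."""
    seen = set()
    cleaned = []
    for row in rows:
        name = row["name"].strip().title()
        amount = int(row["amount"].strip())
        if name not in seen:
            seen.add(name)
            cleaned.append((name, amount))
    return cleaned


def model(records):
    """Analytical modeling: load into a database and compute an aggregate."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE items (name TEXT, amount INTEGER)")
    conn.executemany("INSERT INTO items VALUES (?, ?)", records)
    total = conn.execute("SELECT SUM(amount) FROM items").fetchone()[0]
    conn.close()
    return total


def present(total):
    """Presentation: produce a human-readable summary."""
    return f"Total: {total}"


# The stages chain together, but each one can also be called separately.
report = present(model(cleanse(extract(acquire()))))
print(report)
```

Because every stage only depends on the output of the previous one, a learner could stop after `extract` or `cleanse` and still have a usable partial result.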

There are two more side-pipes:

- governance – making sure that everything goes well, that the process is understandable and that the content meets expectations

- tools and technologies – from SQL to Python (or other) frameworks

Here is the full map:

Download the PNG image or PDF.

Modules or skills

The pipeline skills, or rather modules, are based mostly on experience from projects in the open-data domain, with inspiration from best practices in the corporate environment.

We tried to cover most of the necessary knowledge and concepts so that potential data users would be able to dive into their problem and get some reasonable (sometimes even partial) result at any stage of the pipe. Some of the corporate best practices are too mature to be included at this moment; others were adapted, with either different semantics or different grouping. This was done intentionally.

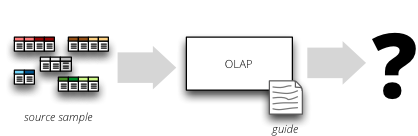

Most of the modules will be based on hands-on problem-solving. They will provide a source dataset (or a bunch of unknown sources, for the purpose of discovery), a sandbox or playground environment, and a few questions to be answered. The learner will try to solve the problem using guiding lecture notes. In an ideal module, the dataset would come from an existing open-data project, so the learner would be able to see the big picture as well.

Next Steps

An outline in the form of a pipeline is nice and fine ... as a guideline. Content has to follow, and content will follow. If you would like to be involved, visit the School of Data website. Follow @SchoolofData on Twitter.

Questions? Comments? Ideas?
